Visualization and Performance

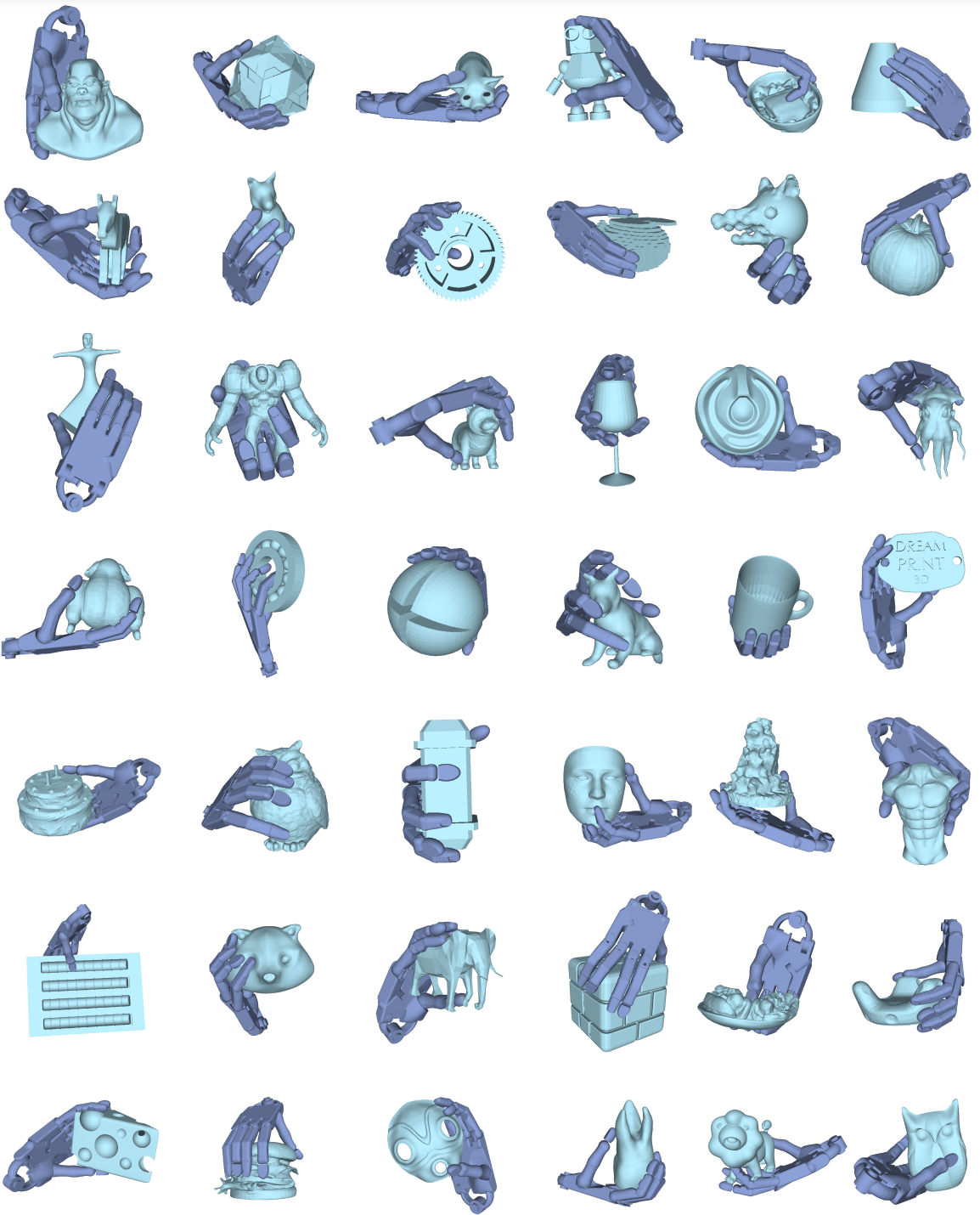

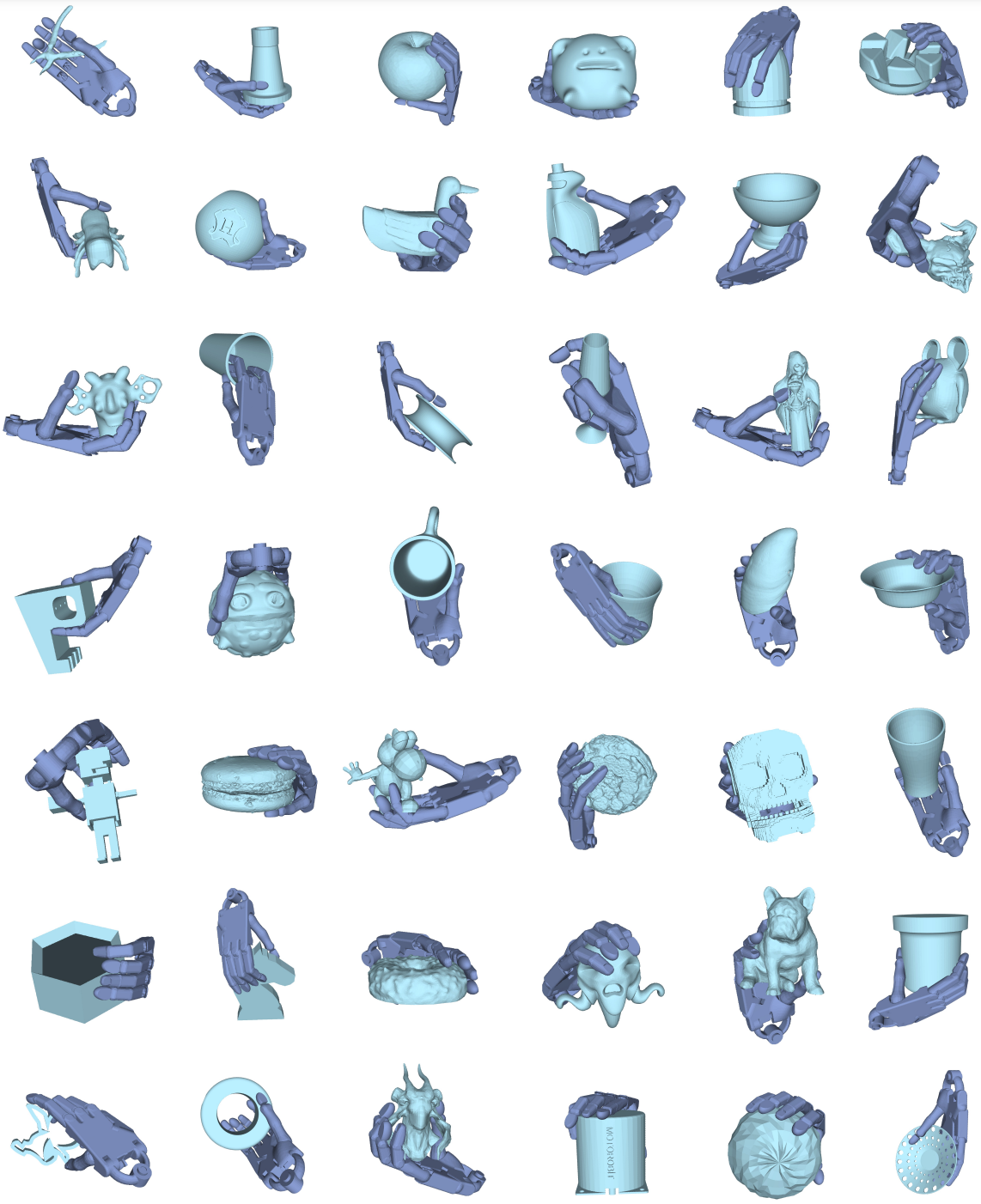

We present DexGrasp Anything, which consistently surpasses previous dexterous grasp generation methods across five benchmarks. Visualizations of our method's results are shown on the left.

In the first set of real-world experiments, we manually controlled the global position of the robotic hand in ROS RViz to move it up and down, highlighting the stability of the post-grasp motion. In the second set, we employed Inverse Kinematics (IK) to automate the motion planning.

A dexterous hand capable of grasping any object is essential for the development of general-purpose embodied intelligent robots. However, due to the high degrees of freedom of dexterous hands and the vast diversity of objects, robustly generating high-quality, usable grasping poses is a significant challenge. In this paper, we introduce DexGrasp Anything, a method that effectively integrates physical constraints into both the training and sampling phases of a diffusion-based generative model, achieving state-of-the-art performance across nearly all open datasets. Additionally, we present a new dexterous grasping dataset containing over 3.4 million diverse grasping poses for more than 15k different objects, demonstrating its potential to advance universal dexterous grasping.

Overview of DexGrasp Anything. During training, object information is processed to extract combined semantic and spatial representations, which serve as conditioning inputs. At each training step, a clean estimate \( \hat{h}_0 \) of the noisy hand pose is derived from the predicted noise \( \hat{\epsilon}_t \), and physics constraints on this estimate steer the model toward a cleaner, grasp-suitable distribution. During sampling, the Physics-Guided Sampler obtains the current estimate \( \hat{h}_0 \) at each denoising step and performs posterior sampling based on it. Physical constraints gradually guide the distribution toward a physically feasible grasp configuration \( \hat{h}^*_0 \), enabling effective grasping of diverse objects.
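The clean-estimation step described above can be illustrated with a minimal sketch. It assumes the standard DDPM relation \( \hat{h}_0 = (h_t - \sqrt{1 - \bar{\alpha}_t}\,\hat{\epsilon}_t) / \sqrt{\bar{\alpha}_t} \), which this page does not spell out, and it stands in for the physics constraints with a toy penalty that pushes a 3D point out of a sphere. The function names, the sphere "object", the `scale` parameter, and the treatment of the pose as a single 3D point are all hypothetical choices for illustration; they are not the constraints or the sampler used by DexGrasp Anything.

```python
import math

def estimate_clean_pose(h_t, eps_hat, alpha_bar_t):
    """Clean estimate from predicted noise (standard DDPM relation, assumed here)."""
    a = math.sqrt(alpha_bar_t)
    b = math.sqrt(1.0 - alpha_bar_t)
    return [(x - b * e) / a for x, e in zip(h_t, eps_hat)]

def toy_penalty_grad(h0_hat, center, radius):
    """Gradient of the toy penalty 0.5 * max(0, radius - ||h0 - center||)^2.

    Hypothetical stand-in for a physics constraint: it is non-zero only
    when the point lies inside the sphere (i.e. "penetrates" the object).
    """
    offset = [x - c for x, c in zip(h0_hat, center)]
    dist = math.sqrt(sum(o * o for o in offset))
    depth = max(0.0, radius - dist)
    if depth == 0.0 or dist == 0.0:
        return [0.0] * len(h0_hat)
    return [-depth * o / dist for o in offset]

def guided_clean_estimate(h_t, eps_hat, alpha_bar_t, center, radius, scale=1.0):
    """Clean estimate followed by one gradient step on the toy penalty."""
    h0_hat = estimate_clean_pose(h_t, eps_hat, alpha_bar_t)
    grad = toy_penalty_grad(h0_hat, center, radius)
    return [x - scale * g for x, g in zip(h0_hat, grad)]

# Usage: noise a known "pose", then recover and correct it.
alpha_bar = 0.25
h0 = [0.5, 0.0, 0.0]    # lies inside the unit sphere
eps = [1.0, 0.0, 0.0]   # pretend the network predicted the true noise
h_t = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
       for x, e in zip(h0, eps)]
corrected = guided_clean_estimate(h_t, eps, alpha_bar, [0.0, 0.0, 0.0], 1.0)
```

With `scale=1.0`, a single step moves a penetrating point exactly onto the sphere surface, and points already outside are left unchanged. A real sampler would apply such guidance at every denoising step and re-noise the corrected estimate before continuing.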

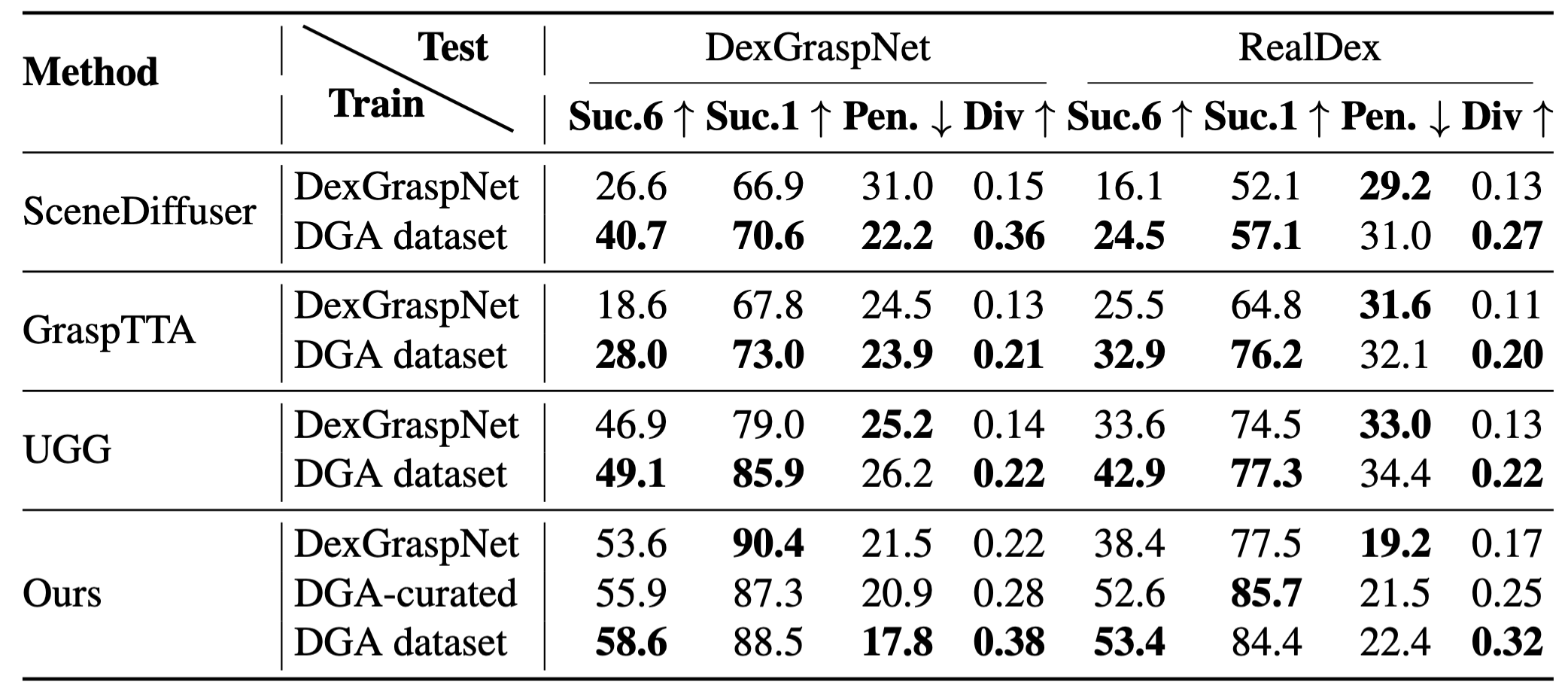

Performance comparison across different methods and datasets. Bold numbers indicate the best scores, while underlined numbers indicate the second-best scores. DexGrasp Anything (w/ LLM) achieves the highest or near-highest performance across most metrics.

Comparison of dexterous grasp datasets. Our dataset is the largest in scale and covers the most diverse set of objects to date; it is currently the largest publicly available dataset for the Shadow Hand.

Evaluating Dataset Quality and Cross-Dataset Generalization. Model performance is compared on DexGraspNet and RealDex, with training on either DexGraspNet or our dataset. The best result within each group is highlighted in bold.

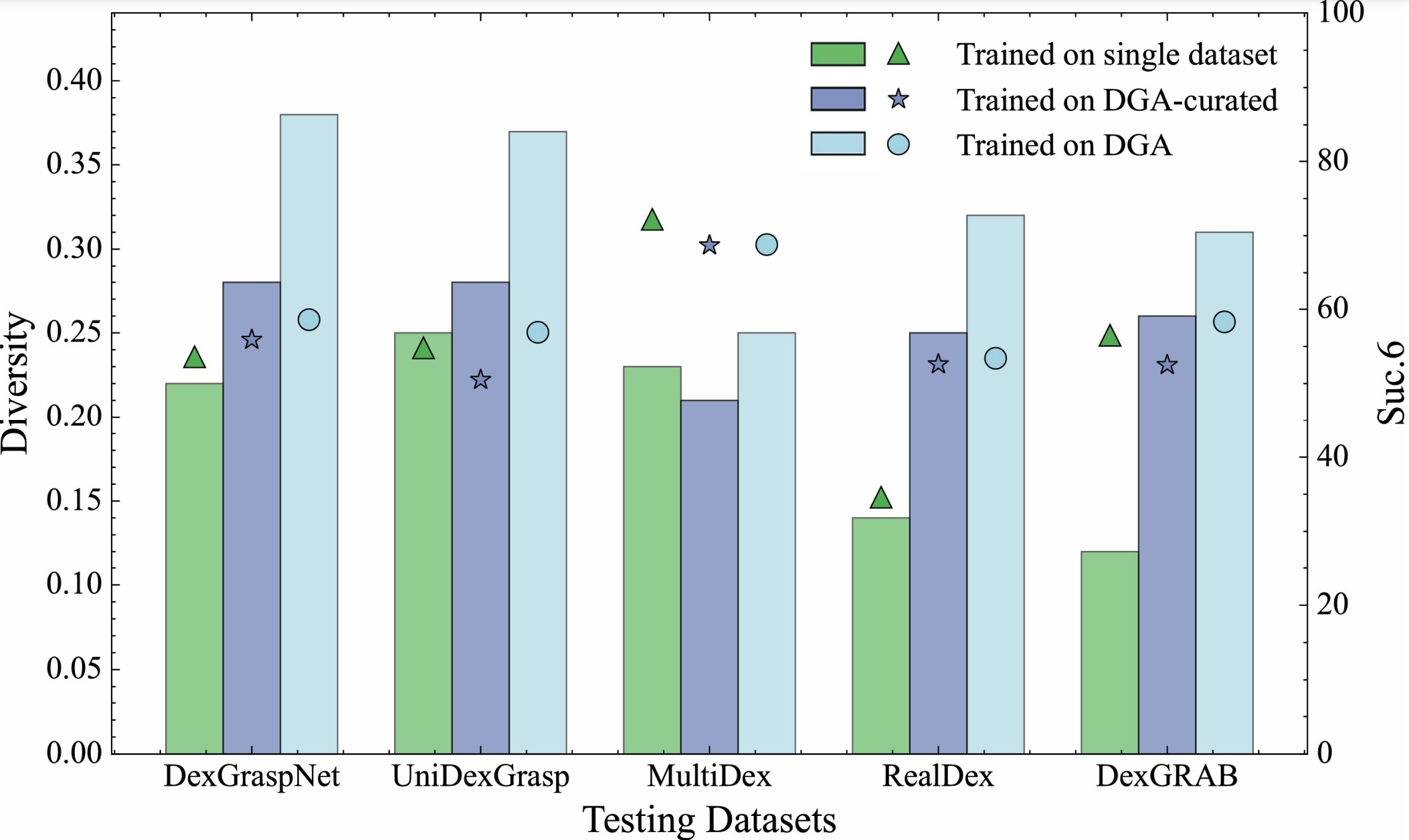

Comparison of diversity (bars) and all-direction grasp success rates (triangles/stars/circles) across models trained on different datasets. "Trained on single dataset" indicates that models were trained on the same dataset they are tested on.

@article{zhong2025dexgrasp,
  title={DexGrasp Anything: Towards Universal Robotic Dexterous Grasping with Physics Awareness},
  author={Zhong, Yiming and Jiang, Qi and Yu, Jingyi and Ma, Yuexin},
  journal={arXiv preprint arXiv:2503.08257},
  year={2025}
}